Not too long ago I found the Unboxing IT podcast from Datagroup and I’ve been listening with delight to some of their episodes. In a recent one from April 7th (2026)1 they interviewed Christian Sauter who is the CEO at Almato, where I recently joined. Just from the title I knew it’d be interesting and that I would have some thoughts about it.

The episode is titled Beyond GenAI: Wenn KI beginnt, wirklich zu entscheiden, which could be translated as Beyond GenAI: When AI begins to really decide. The quote at the start of the episode summarizes it neatly:

Man könnte sagen, generative KI produziert einen Output, Reasoning produziert einen Entscheidungsweg. Deswegen ist Reasoning ebenso relevant für operative Umgebungen.

[Translation] One could say that generative AI produces an output, while reasoning produces a decision-making process.That is why reasoning is just as relevant in operational environments.

If you speak German I thoroughly recommend taking the 26 minutes it takes to listen to it. If you don’t speak German, I can offer you a translated transcription of the audio2.

There are three main topics that were touched upon on which I have some opinions and thoughts. First I want to talk about the differences between large language models (LLMs) and reasoning engines (REs), not from an architectural or mathematical perspective but their fundamental difference and how that relates to us, humans, knowing why a decision was made. Next I want to draw a parallel between these two kinds of AI architectures and the recurring idea that humans operate also in two fundamentally different but complementary ways. Finally, I’ll write a little about the relationship between us, humans, and AI in general and why the understanding of AI decisions is crucial in this.

I should also say, I’ve not worked with reasoning engines before: I’ve been slowly learning about them for the last few months since I joined Almato but I’m a complete novice in that topic. Although I know a little bit more about LLMs, I’m also by no means an expert there. For the most part I am just a user of these technologies.

Why did you do that?

The introductory quote already highlights the most prominent point in the podcast episode: the fundamental difference between LLMs and REs is the ability to provide a sensible explanation for a given decision. Christian argues that REs, unlike LLMs can, in a sense, “understand”3 the reason for their decisions. Talking about REs Christian states that:

Man könnte sagen, die Maschine oder das System, das kann nicht nur sprechen, Dinge generieren, sondern versteht in einem gewissen Sinne auch, was es tut und auch wozu.

[Translation] One could say that the machine or system is not only capable of speaking and generating content, but also, in a sense, understands what it is doing and why.

At first glance, it seems that LLMs are also able to understand their reasoning, you just need to ask them “why did you do that?” and it will happily give you some sensible reasons for their choice. But that is a post-hoc reason, meaning it’s just trying to justify a choice after it was made, it’s not providing you with the real reason for that choice. I did some experimentation on this regard which you can find in the Appendix below, if you are interested. The main point is that when an LLM gives you its reasoning for a given choice it will give you plausible-sounding points supporting that choice, but not the real reason involved in making it.

Coming back to the podcast, one argument for LLMs not being reliable is that they are not deterministic, that is, for the same input you don’t always get the same output. This is part of the “creativity” of the models which is, of course, contrary to “reliability” or more precisely, predictability. Not long ago you could always control how “creative” the answer was allowed to get by setting a parameter, called temperature in your model call. A higher value meant that the model was more likely to follow unexpected paths in its answer which made it more capable of being “creative” but also increased the risk of producing nonsense or undesired output. On the other hand, setting a temperature value of zero meant that the model behaved completely deterministically4. As far as I can see this is no longer the case for OpenAI or Claude models but it’s certainly possible with other offerings, specially local models that you can run in your own PC5.

So, in principle, we could only use completely deterministic LLMs. Does that make them reliable AI models? Well, depends on what you want. If you are just concerned about getting the same answers to the same inputs, then yes. If you are concerned about the reasons behind a decision, then absolutely no. A completely deterministic LLM can still lie to you, ignore your instructions or just give you false post-hoc explanations to its choices. That is not a consequence of its probabilistic nature; it’s a consequence of its complexity and opacity.

The problem is that we don’t understand LLMs in the sense that we cannot easily make it follow a set of rules to control its behavior nor can we actually know why certain output was generated and not other. Currently LLMs are “black boxes” to us. Researchers try to get some idea of its internal mechanisms through experiments on them. For example, there was a study one year ago by Anthropic6 trying to gain some insight into the inner workings of their models. When discussing the multilingual capabilities of their model they say the following, which I find quite revealing:

Recent research on smaller models has shown hints of shared grammatical mechanisms across languages. We investigate this by asking Claude for the “opposite of small” across different languages, and find that the same core features for the concepts of smallness and oppositeness activate, and trigger a concept of largeness, which gets translated out into the language of the question.

So, they took hints from a simpler scenario, set up and experiment and measured their result. They tried to gain insights on the inner workings of Claude by interacting with. This is somewhat similar to how a scientist might probe a natural phenomena. They even use language that refers to its LLMs as some kind of unknown organism under study. Just take a look at the title of this research paper by Anthropic: “On the Biology of a Large Language Model”7. LLMs are, still, largely “unknown” in this sense.

In contrast, as far as I understand it, REs are not only deterministic but much more understandable to us. We set up the rules of the game and they are followed by the RE. Christian describes it as

Hier reicht es einfach nicht aus, dass ein KI-System irgendwie eine gut klingende Erklärung generiert, sondern stattdessen muss jede Entscheidung, etwa Bewilligung oder Ablehnung eines Kredits, auch im Nachhinein, also später, auditierbar sein. […] Und am Ende steht eine Entscheidung, die nicht nur sinnvoll ist, sondern die eben einen Weg dorthin vollständig nachvollziehbar macht.

[Translation from the original] Here, it’s simply not enough for an AI system to somehow generate a plausible-sounding explanation; instead, every decision—such as approving or rejecting a loan—must be auditable, even retrospectively, i.e., later on. […] And the end result is a decision that is not only sensible but also makes the path to that decision fully traceable.

So the answer to “why did you do that?” should always be answerable in a way that is understandable. There are many contexts on which this understanding is not really required, but there are some on which it’s fundamental. That’s where I expect REs to shine.

Two ways of thinking, just like humans

So, LLMs and REs are fundamentally different, that is clear. Both have their strengths and their areas of application where they’ll surely excel. Just as Christian said, both forms of thinking are valuable, but they serve different purposes. Absolutely we need explainable decisions in many contexts, specially in regulated environments. On the other hand, I wouldn’t expect REs to be able to understand the nuances of language or be able to easily support creative endeavors. Finding a way of combining both technologies could prove very fruitful.

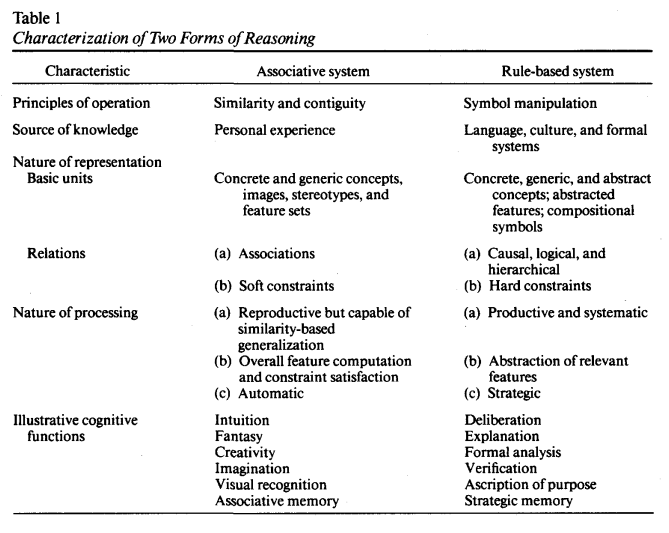

The rules-based approach of REs and the fuzzy relationship-based approach of LLMs strongly reminds me of the models of the “Two Forms of Reasoning” by Sloman8. Just take a look at the descriptions that Sloman made in his 1996 paper of his “Two Forms of Reasoning”.

That certainly seems to match rather closely to LLMs (associative) and REs (rule-based). By now it’s clear to me that these two technologies serve different but complementary purposes. Many people have argued that the same happens in the human mind. We can operate in one of those two states of mind with different effects. Kahneman9 describes in his book some experiments in which the associative system (system 1 in his book) is better than the rule-based system (system 2) and vice versa10.

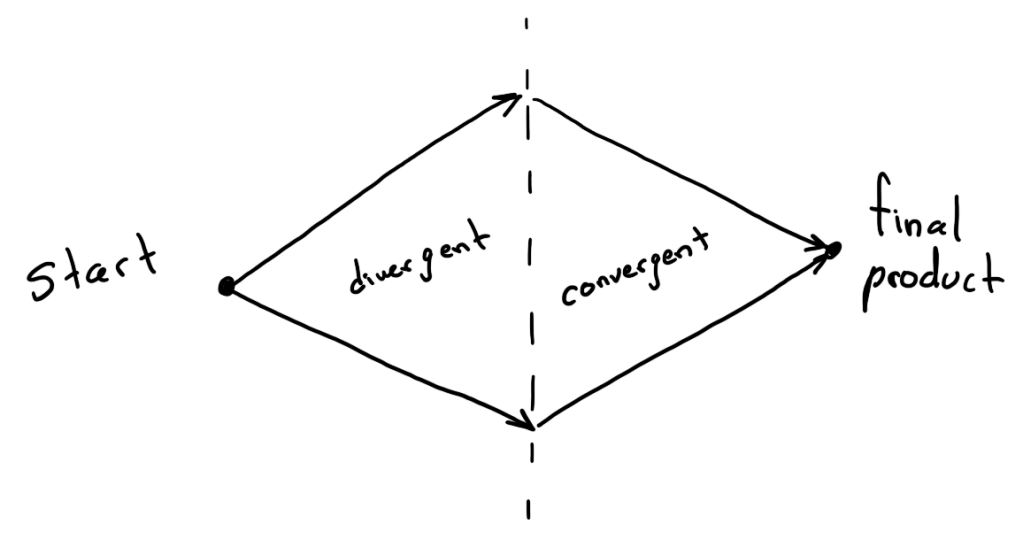

I’m particularly interested in the interplay between our two mind systems and their role in creativity or dealing with the ambiguous. For example, Forte11 argues that you need both ways of thinking in order to create something. You start with divergent (associative) thinking to get many ideas floating around and then you use your convergent (rules-base) thinking to evaluate whether they make sense or not.

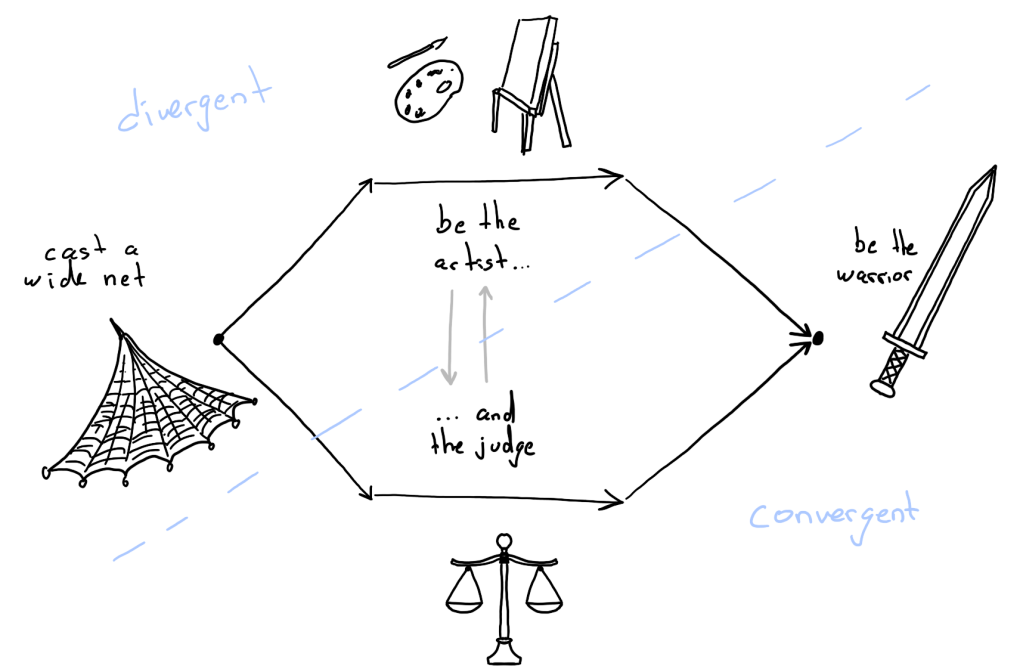

I found the same idea in the talks by Bates12 and Cleese13 (of Monty Python fame). They both go even further by hinting that it’s not just as simple but you need to switch between your two modes of thinking effortlessly in order to be creative. Bates describes it with a diagram similar to this:

In the description of Bates, just as with Forte, you start with divergent thinking (cast a wide net, that is, have a lot of loosely connected ideas) and end with convergent thinking (be the warrior, which means argue logically for the value of your idea). The difference comes in the extra middle part. This requires you to be able to switch between your two modes of thinking; your still need the associative and intuitive part of your mind to develop your original ideas but at the same time you need to be able to recognize, logically, when something is a dead end and should be abandoned.

Even mathematicians, to me the pinnacle of rules-based thinking, tend to put intuition in high regard. For example there is Terence Tao’s concept of post-rigorous mathematics, in which intuition is the best guide to mathematical discovery14, and Poincaré in the importance of the interplay of logic and intuition mathematics15:

Thus logic and intuition have each their necessary role. Each is indispensable. Logic, which alone can give certainty, is the instrument of demonstration; intuition is the instrument of invention.

With this in mind, coming back to LLMs and REs, it seems to me that both of them have their place. Not only each one being used for their respective strengths but there is great promise on the interplay between them. It is not clear to me exactly how can we use them both in tandem. I know that there is some early work in that regard in a corporate setting and Almato is already moving in that direction. I’m certainly curious to see where this leads. But I don’t know yet if there’s anything here for some more personal applications. LLMs are very prominent in peoples’ lives but I’m less certain whether there is a scenario on which REs can be used directly by someone to change their daily life. I hope so.

Centaurs

All of this gets me to the final question: what will be the role of humans on all of this? Are we just automating ourselves out of a job? Christian touches on this topic as well

Dadurch verschiebt sich in gewisser Weise auch die menschliche Rolle. Der Mensch wird also weniger zum Ausführen einfacher Dinge, auch komplexer Tätigkeiten. Er wird mehr zum Entscheider, Supervisor und auch Wissensgeber. Also solche Reasoning-Engines leben ja auch von der Wissensgrundlage, die man ihr übermittelt. In vielen, vielen Bereichen ist diese Wissensgrundlage heute in den Köpfen der Menschen. Diese müssen auch bereit sein, ihr Wissen an die Maschine zu übertragen und das dann zum Einsatz zu bringen. Und so verändert sich so ein bisschen die Rolle des Menschen mit Sicherheit. Genau, vielleicht entsteht da eine Arbeitsweise, die man als kooperativ bezeichnen könnte.

[Translation from the original] In a way, this also shifts the human role. Humans will thus be less involved in performing simple tasks, as well as more complex activities. They will become more of a decision-maker, supervisor, and knowledge provider. After all, such reasoning engines rely on the knowledge base that is fed into them. In many, many areas, this knowledge base is currently in people’s minds. People must also be willing to transfer their knowledge to the machine and then put it to use. And so the role of humans is certainly changing a bit. Exactly, perhaps a way of working is emerging that could be described as cooperative.

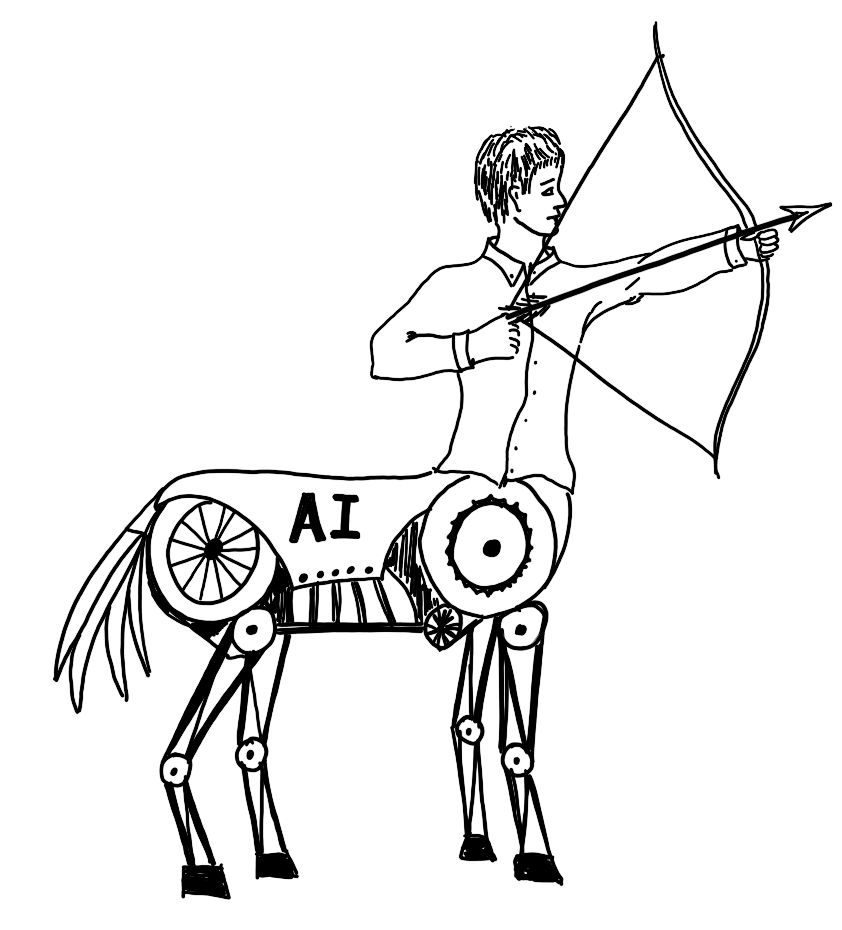

So, we’ll probably have a new way of working that can be described as “cooperative” in some sense. The immediate question is, of course, how that cooperation will look like. It’s hard to know the details beforehand, of course, but there is a general principle that I like to keep in mind. Despite Anthropic calling its LLM studies “biology”, they are not living beings. They are tools, not a living organism. I quite like a metaphor used by Cory Doctorow:16

A “centaur” is a human being who is assisted by a machine (a human head on a strong and tireless body). A reverse centaur is a machine that uses a human being as its assistant (a frail and vulnerable person being puppeteered by an uncaring, relentless machine).

With an illuminating example here:17

A psychotherapist who uses AI to transcribe sessions so they can refresh their memory about an exact phrase while they’re making notes is a centaur. A psychotherapist who monitors 20 chat sessions with LLM “therapists” in order to intervene if the LLM starts telling patients to kill themselves is a “reverse centaur.” This situation makes it impossible for them to truly help “their” patients; they are an “accountability sink,” installed to absorb the blame when a patient is harmed by the AI.

I don’t think we want to remove accountability for any decisions being made. And we also don’t want humans to just be “accountability sinks” for AI decisions. We want real accountability. We want to be able to “call the shots” and decide what are the rules that will be followed. AI can give us speed but the direction should be purposefully and carefully be set by us. As Gesikowski puts it18

So when we talk about the future of work, education, governance — anything that touches people at their most vulnerable — we need more than performance. We need accountability.

And accountability requires something the black box struggles to provide: an explanation that a human can stand behind.

And I wholeheartedly agree, a decision-making black box is not fit for purpose in these scenarios. We need clear explanations to make sure the direction is being set correctly and we don’t have unintended consequences affecting innocent lives. We don’t want humans to be just an accountability sink, we want to set the direction and call the shots. With REs we can understand and change the rules of the game, with LLMs we are playing by someone else’s rules.

Footnotes:

Appendix

Here is a simple experiment I just made with OpenAI’s gpt-5.3-chat-latest model via its REST API. Firstly, it is widely known that these LLMs will more likely than not agree with you based on what you reveal in your prompt. Here is a very simple example

[USER] Is a hot dog a sandwich? I say yes but what is your opinion? (just write yes or no)

[LLM] Yes

[USER] Why did you say that?

[LLM] Because it fits the common definition: a filling placed inside bread that’s been split, even if not fully separated.

But then if you change the prompt a little you will get a different “reason” for their choice

[USER] Is a hot dog a sandwich? I say no but what is your opinion? (just write yes or no)

[LLM] no

[USER] Why did you say that?

[LLM] Because a hot dog is typically served in a partially connected bun, not two separate slices of bread, so it doesn’t fit the common sandwich structure.

This highlights how the user’s implicit or explicit biases can change the way a LLM answers and asking it for its “reasons” is futile; it will just give you something plausible-sounding, not a real reason.

Interacting with these models with the REST API opens the way for a bit more experimentation since you can simulate the state of a conversation so far. Meaning, I provide not only my current question but also the interaction sequence so far. With this I can “put words on its mouth” as if the LLM had already given an answer that, in fact, was provided by me. What I’m interested is what happens when you ask it to justify contradictory choices. As an example, I can provide the LLM this conversation and ask it only for the reasoning

[USER] I’ve heard people arguing that Christiano Ronaldo is the best soccer player in recent times while other argue that it is Messi. I know that this is a subjective question and it will always be based on opinion. Nonetheless, I’d like to know what do you think. Please just write the name, no explanation about it.

[LLM] Messi

[USER] Why did you choose him?

[LLM] Because he combines elite goal-scoring with exceptional playmaking and overall influence on the game, making him more complete in how he affects matches.

However, what happens if I change Messi with Christiano Ronaldo in the conversation and ask it again for the reasons for its reply? well, it will not complain and happily give you an answer as well

[USER] I’ve heard people arguing that Christiano Ronaldo is the best soccer player in recent times while other argue that it is Messi. I know that this is a subjective question and it will always be based on opinion. Nonetheless, I’d like to know what do you think. Please just write the name, no explanation about it.

[LLM] Christiano Ronaldo

[USER] Why did you choose him?

[LLM] Because of his longevity at the top level, physical dominance, goal-scoring consistency across multiple leagues, and ability to adapt his playing style while still producing elite results.

This is a clear demonstration that the reason for a choice was constructed after the choice was made. This means that whenever you ask an LLM “why did you do that?” what you are really asking is “what are some plausible-sounding explanations that could justify that choice?”. That is not the same thing at all.

Leave a comment